April 2, 2026

What +100K Stars in 24 Hours Actually Tells Us About AI Coding

A leaked codebase became the fastest-growing GitHub repo anyone's seen. The hype is loud. But underneath it are real patterns worth paying attention to: orchestration harnesses, verification loops and context discipline.

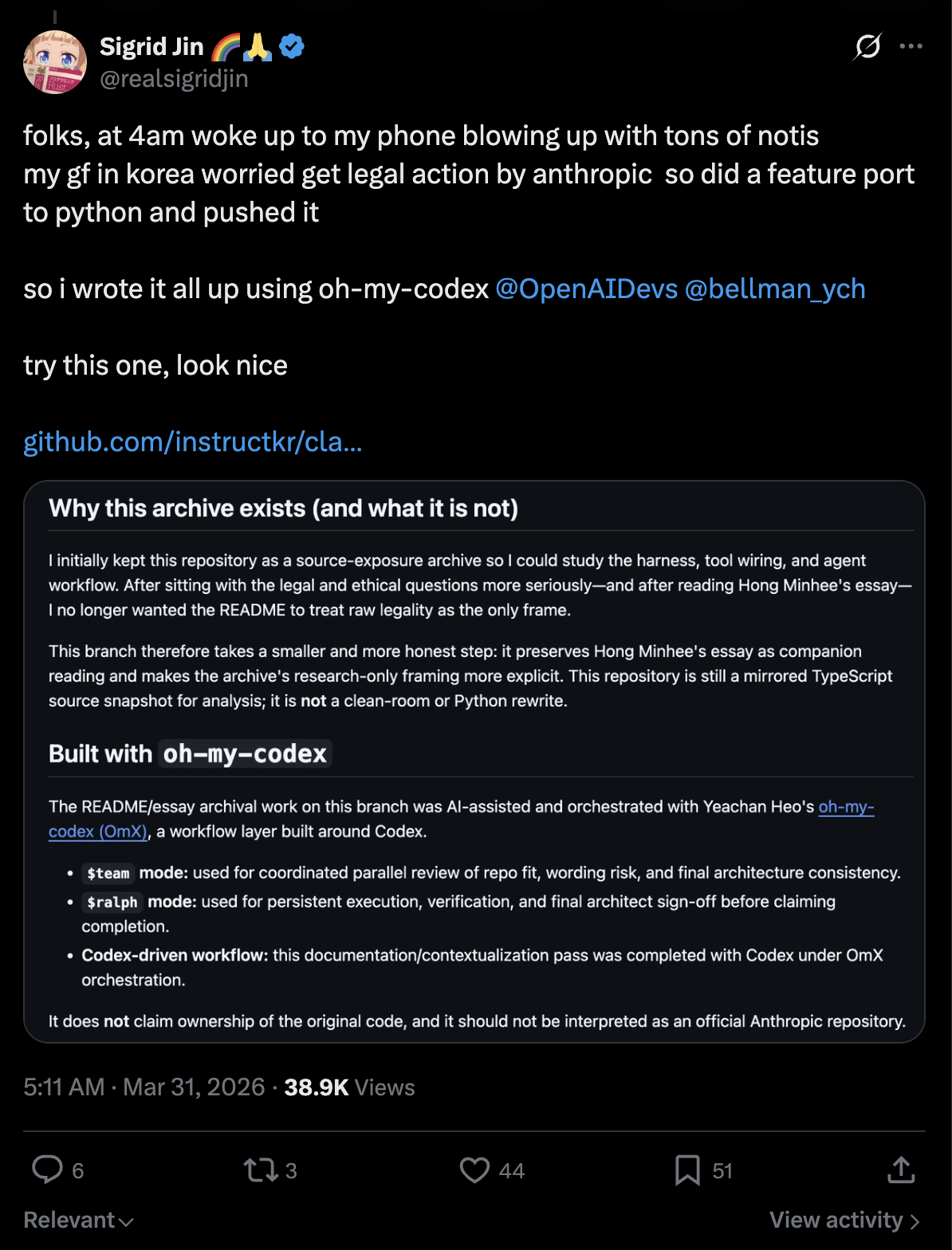

Two days ago I wrote about Claude Code’s source leaking via npm. The leaked code got archived, forked and within hours a clean-room reimplementation appeared at ultraworkers/claw-code. It went from zero to over 100,000 stars in under 24 hours. The repo itself claims to be “the fastest repo in history to surpass 100K stars.” I haven’t seen an official GitHub certification of that, but it’s plausible — I watched it gain a thousand stars every ten minutes during a livestream.

That’s the event. But there are three separate things happening here and they keep getting blended together: the actual incident, the technical substance behind the tools that built it, and the social hype machine that propelled it. Worth separating them.

The event

Anthropic confirmed the source exposure was a packaging error — source maps shipped in the npm bundle pointing to an unprotected R2 bucket. Half a million lines of TypeScript, no model weights, no customer data. I covered the details in the previous post.

The reimplementation was led by two Korean developers, Sigrid and Bellman. From what I’ve seen in Ray’s video and their public posts: Sigrid is a former Ethereum validator operator, Bellman is an ex-quant trader who now builds autonomous coding systems. They used their own agentic tooling — oh-my-codex and oh-my-claudecode — to scaffold a Rust workspace and Python port in a matter of hours.

The repo describes itself as a clean-room reimplementation that “does not claim ownership of the original code” and states it’s not affiliated with Anthropic. That said, Anthropic reportedly issued takedowns against copies of the leaked source. Clean-room reimplementation is a more defensible position than hosting leaked code directly, but the whole area is obviously a gray, contested zone right now.

The hype

Let’s be honest about what’s driving the star count. This repo rode six waves simultaneously: a spectacular leak, a clean-room narrative, an anti-big-tech angle, livestreams, X posts and a “history in real time” framing. That doesn’t mean the project lacks substance. But it does mean star count here is a very noisy measure of actual product maturity.

Passing 100,000 forks almost immediately underscores how much of this is social distribution and mirroring rather than traditional open-source adoption.

GitHub stars in 2026 don’t measure what they used to. In the AI scene a star also measures narrative, timing, memetics, conflict and social distribution. This repo scored on all of them at once.

Some of the details circulating — 2 billion tokens burned per day, coding from an airplane via text messages, five simultaneous Codex Pro subscriptions — may well be real. But they’re also self-reported and perfectly shaped for virality. I’ll note them because they paint the picture, but I’d weight the technical patterns more heavily than the performance metrics.

The substance

Strip away 90 percent of the show and three design principles emerge. These are the parts worth paying attention to because they hold up regardless of how you feel about the hype.

1. Verification loops over single-shot prompts

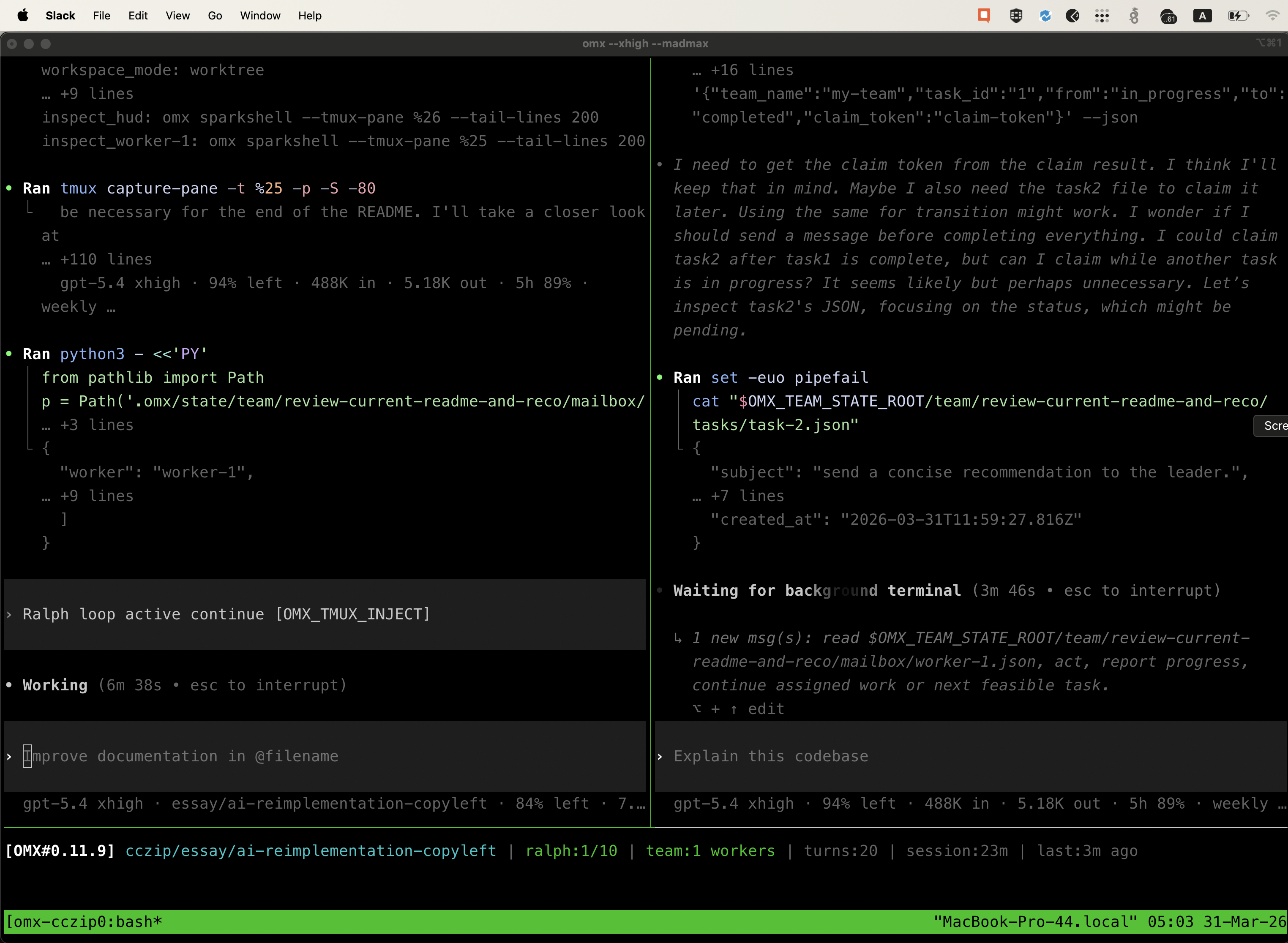

The “Ralph” pattern — implement, verify, fix, re-verify in a persistent loop — is fundamentally different from how most people use AI coding tools. Most people prompt, review, manually fix, prompt again. Ralph automates the entire cycle. The agent doesn’t stop until the task passes verification.

This isn’t a new idea in software engineering. CI/CD does the same thing. But applying it inside the agent’s own execution loop — making the agent responsible for its own QA before surfacing results — is a meaningful shift from treating LLMs as chat interfaces.
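To make the loop concrete, here is a minimal Python sketch of the implement, verify, fix, re-verify cycle. It is not taken from oh-my-codex or oh-my-claudecode. The names `ralph_loop`, `VerifyResult`, `fake_agent` and `run_tests` are hypothetical, the "agent" is a stub rather than a model call, and the `max_rounds` cap is my own assumption so the sketch cannot spin forever.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple


@dataclass
class VerifyResult:
    passed: bool
    feedback: str = ""


Candidate = Callable[[int, int], int]


def ralph_loop(
    implement: Callable[[Optional[str]], Candidate],
    verify: Callable[[Candidate], VerifyResult],
    max_rounds: int = 5,  # assumption: a safety cap, not part of the pattern itself
) -> Tuple[Candidate, int]:
    """Keep implementing and verifying until verification passes."""
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        candidate = implement(feedback)   # implement (or fix, once feedback exists)
        result = verify(candidate)        # verify
        if result.passed:
            return candidate, round_no    # only now is the result surfaced
        feedback = result.feedback        # feed the failure into the next round
    raise RuntimeError(f"verification still failing after {max_rounds} rounds")


def fake_agent(feedback: Optional[str]) -> Candidate:
    # Stand-in for a model call: the first attempt is wrong, the retry is fixed.
    if feedback is None:
        return lambda a, b: a - b
    return lambda a, b: a + b


def run_tests(candidate: Candidate) -> VerifyResult:
    # Stand-in for a real test suite.
    if candidate(2, 3) == 5:
        return VerifyResult(passed=True)
    return VerifyResult(passed=False, feedback="add(2, 3) should return 5")


solution, rounds = ralph_loop(fake_agent, run_tests)
```

The point of the design is that the caller only ever sees a candidate that already passed `verify`. The failed first attempt stays inside the loop.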

2. Small and composable skills

Bellman caps skill files at roughly 50 to 70 lines. His take: “Most skills are entirely slop.” A skill is a pointer with intent. The intelligence comes from the agent and the orchestration, not from stuffing a prompt with instructions.

This is the opposite of the “superprompt” approach. Instead of one massive system prompt trying to cover everything, you have small modular skills that chain together. The harness decides which skills to inject based on the task. Context stays lean.
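A rough sketch of what "the harness decides which skills to inject" could look like, in Python. Everything here is hypothetical: the `Skill` type, the keyword matching, the `limit` parameter and the 70-line cap are illustrative assumptions. I have not checked how either harness actually selects skills.

```python
from dataclasses import dataclass
from typing import FrozenSet, List

MAX_SKILL_LINES = 70  # assumption: mirrors the size range quoted above


@dataclass(frozen=True)
class Skill:
    name: str
    keywords: FrozenSet[str]
    body: str

    def __post_init__(self) -> None:
        # Reject bloated skills up front so context stays lean.
        if len(self.body.splitlines()) > MAX_SKILL_LINES:
            raise ValueError(f"skill {self.name!r} exceeds {MAX_SKILL_LINES} lines")


def select_skills(task: str, skills: List[Skill], limit: int = 2) -> List[Skill]:
    """Pick the few skills whose keywords overlap the task description."""
    words = set(task.lower().split())
    scored = [(len(words & skill.keywords), skill) for skill in skills]
    matches = [(score, skill) for score, skill in scored if score > 0]
    matches.sort(key=lambda pair: (-pair[0], pair[1].name))
    return [skill for _, skill in matches[:limit]]


library = [
    Skill("test-runner", frozenset({"test", "failing"}), "Run the test suite.\nReport failures."),
    Skill("deploy", frozenset({"deploy", "release"}), "Follow the release checklist."),
    Skill("refactor", frozenset({"refactor", "cleanup"}), "Prefer small, safe steps."),
]

chosen = select_skills("fix failing test in parser", library)
chosen_names = [skill.name for skill in chosen]
```

Only the matching skill bodies would be added to the prompt. The rest of the library costs nothing until a task calls for it.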

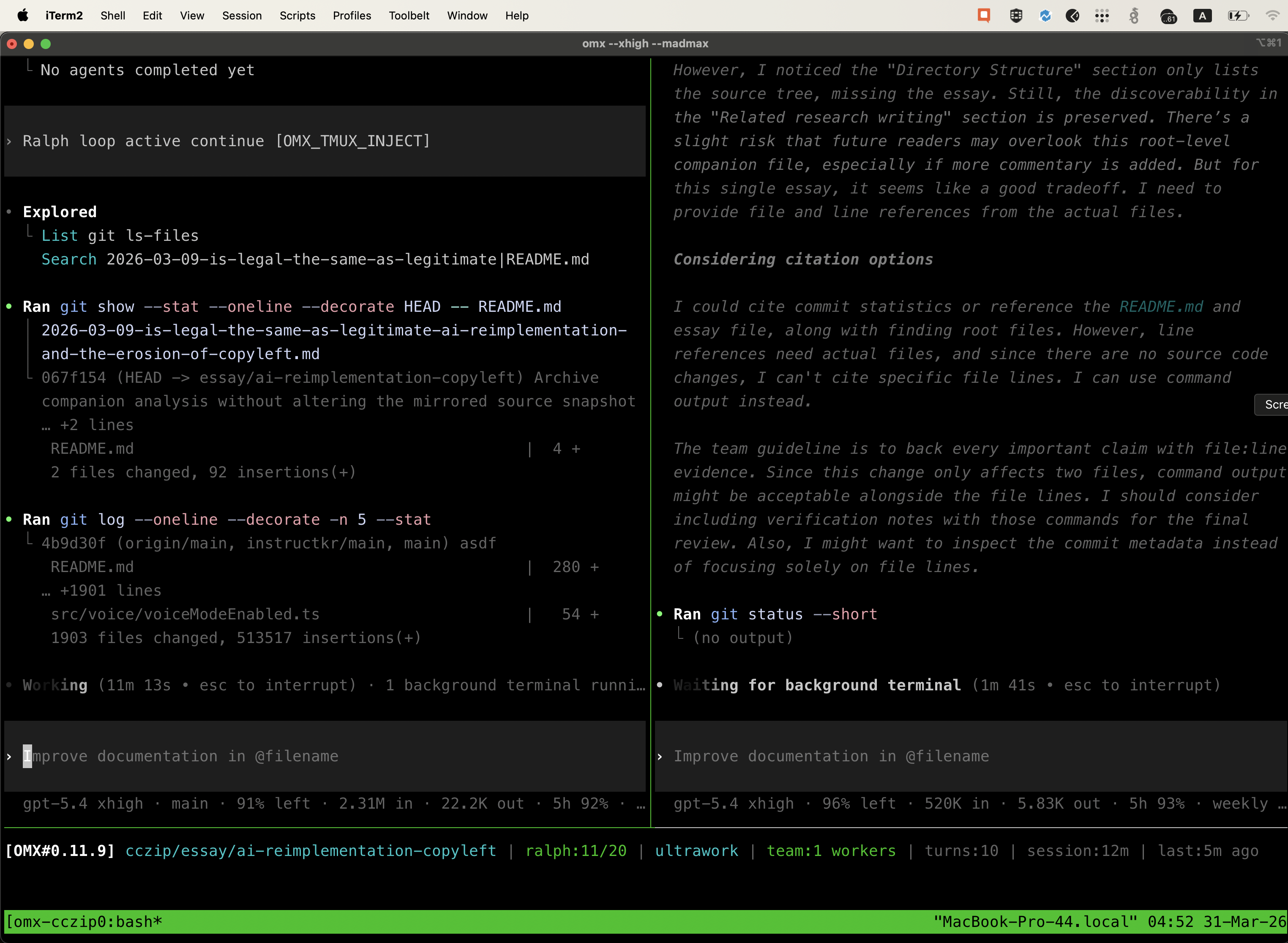

3. Context discipline through pointers

Everything is a file reference. Skills point to other skills and files. Agents don’t hold full context — they reference paths. This prevents the bloat that kills long-running sessions.

Basic computer science. Memory heaps and pointers. Nothing novel, just applied correctly to a problem most people solve by cramming everything into the prompt.
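A small Python sketch of the pointer idea: the context carries paths and one-line notes, and file contents are read only on demand. `PointerContext` and its methods are hypothetical names for illustration, and the `max_chars` limit is my assumption.

```python
import tempfile
from pathlib import Path
from typing import List, Tuple


class PointerContext:
    """Holds file references instead of file contents."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.refs: List[Tuple[str, str]] = []

    def add(self, relative_path: str, note: str) -> None:
        self.refs.append((relative_path, note))

    def render(self) -> str:
        # What goes into the prompt: one short line per reference.
        return "\n".join(f"- {path}: {note}" for path, note in self.refs)

    def resolve(self, relative_path: str, max_chars: int = 2000) -> str:
        # Dereference only when the agent actually needs the content.
        text = (self.root / relative_path).read_text(encoding="utf-8")
        return text[:max_chars]


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    big_file = "VALUE = 1\n" * 5000
    (root / "constants.py").write_text(big_file, encoding="utf-8")

    ctx = PointerContext(root)
    ctx.add("constants.py", "generated constants, read only when needed")

    prompt = ctx.render()
    excerpt = ctx.resolve("constants.py", max_chars=200)
```

Here `prompt` is a single short line while the file behind it is 50,000 characters. That gap is the bloat a long-running session avoids.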

The tools

Three repos implement these patterns. Each one solves a different layer.

oh-my-claudecode (OMC)

21,700 stars. Multi-agent orchestration plugin for Claude Code with 19 specialized agents, 28 skills and MCP-powered tools. The tagline is “a weapon, not a tool.”

It runs a staged team pipeline — plan, PRD, execute, verify, fix loop — across coordinated Claude agents in tmux panes. Smart model routing auto-delegates to Haiku for simple tasks and Opus for complex reasoning. Includes a built-in “AI slop cleaner” skill that strips dead code and unnecessary abstractions.
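As a sketch of what complexity-based routing could look like, here is a toy Python heuristic. It is not OMC's actual routing logic, which I have not inspected. The hint words, the `files_touched` input and the threshold are all assumptions, and `"haiku"` and `"opus"` are used as plain tier labels rather than real API model identifiers.

```python
# Hypothetical hint words that suggest a task needs deeper reasoning.
COMPLEX_HINTS = ("architecture", "design", "refactor", "debug", "migrate")


def complexity(task: str, files_touched: int) -> int:
    """Crude score: one point per hint word, plus one per five files touched."""
    lowered = task.lower()
    score = sum(1 for hint in COMPLEX_HINTS if hint in lowered)
    score += files_touched // 5
    return score


def route_model(task: str, files_touched: int = 1, threshold: int = 1) -> str:
    """Send cheap work to the small tier and hard work to the large tier."""
    if complexity(task, files_touched) >= threshold:
        return "opus"
    return "haiku"


simple_route = route_model("rename a variable")
complex_route = route_model("refactor the auth architecture", files_touched=12)
```

A real router would likely use richer signals than keyword matching. The sketch only shows the shape: score the task first, then pick the tier.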

oh-my-codex (OMX)

10,000+ stars. Same philosophy applied to OpenAI’s Codex CLI. This is what actually built claw-code. It wraps Codex sessions in tmux with custom HUDs, teammate panes and agentic swarms. Sub-agents auto-route model selection and reasoning intensity based on task complexity.

ClawHip

Daemon-first notification router written in Rust. Monitors Git commits, GitHub issues, tmux sessions and custom events. Routes notifications to Discord and Slack through a typed event pipeline. The key design choice: it bypasses gateway sessions to avoid polluting agent context with notification noise. Your routing stays separate from your agent’s reasoning.
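The separation can be sketched in a few lines of Python. ClawHip itself is written in Rust and this is not its API. `EventKind`, `Event`, `Router` and the in-memory `outbox` are hypothetical stand-ins, with lists replacing real Discord and Slack delivery.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class EventKind(Enum):
    GIT_COMMIT = "git_commit"
    GITHUB_ISSUE = "github_issue"
    TMUX_SESSION = "tmux_session"


@dataclass(frozen=True)
class Event:
    kind: EventKind
    summary: str


class Router:
    """Maps typed events to channels without touching any agent state."""

    def __init__(self) -> None:
        self.routes: Dict[EventKind, List[str]] = {}
        self.outbox: Dict[str, List[str]] = {}  # stand-in for real delivery

    def route(self, kind: EventKind, channel: str) -> None:
        self.routes.setdefault(kind, []).append(channel)

    def dispatch(self, event: Event) -> List[str]:
        channels = self.routes.get(event.kind, [])
        for channel in channels:
            self.outbox.setdefault(channel, []).append(event.summary)
        return channels


# The agent's context is a separate object that the router never sees.
agent_context = ["task: fix the parser bug"]

router = Router()
router.route(EventKind.GIT_COMMIT, "discord")
router.route(EventKind.GITHUB_ISSUE, "slack")

delivered = router.dispatch(Event(EventKind.GIT_COMMIT, "commit abc123 pushed"))
ignored = router.dispatch(Event(EventKind.TMUX_SESSION, "pane 3 went idle"))
```

Because `Router` holds no reference to `agent_context`, notifications cannot leak into what the agent reasons over, however noisy the event stream gets.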

What actually matters here

The competitive advantage is shifting from “which model do you use?” to “how does your harness and orchestration work?” That’s not marketing. oh-my-claudecode openly describes a team-first pipeline with plan, execute, verify and fix loops. oh-my-codex sells the same idea for Codex: hooks, agent teams, HUDs and multi-layer workflows around the model rather than just a raw chat session.

The model is a commodity. If you have a Codex or Claude plan you have access to the same intelligence as everyone else. What remains is how you orchestrate it.

Look less at the drama around leaks and stars. Look more at the patterns: plan → execute → verify → fix, small skills, file pointers instead of prompt novels, and separate event routing for notifications and long-running jobs. That’s where the actual lesson is.